December 8, 2024

Artificial Intelligence in Architecture: The World Beyond Visual Generative Models

This story was originally published on the Bluebeam Blog.

In 2022, the visual generative artificial intelligence (AI) tools Midjourney and DALL-E hit the scene, both letting creators input text prompts to bring wild conjurings to life as realistic renderings. According to Stanislas Chaillou, author of “Artificial Intelligence and Architecture,” AI is the latest major development in architectural technology. Although it’s easy to get swept up in the glitzy generative side, designers are finding many more ways that AI can expand creativity while saving time, money and brainpower for more rewarding tasks.

In London, for example, the Applied Research and Development Group (ARD) at Foster + Partners began applying AI and its offshoot machine learning (ML) in 2017. The group has used it for models ranging from design assist and surrogates to knowledge dissemination, business insight and, yes, its own take on diffusion models that generate images from natural language. Los Angeles-based Verse Design tapped AI to meet aesthetic and performance criteria for a structure that won a 2023 A&D Museum Design Award.

But implementing AI doesn’t come without obstacles—including questions about protecting intellectual property (IP), training with appropriate datasets and defining creativity when it seems to lie with the designer of the AI script.

AI design assistance arrives

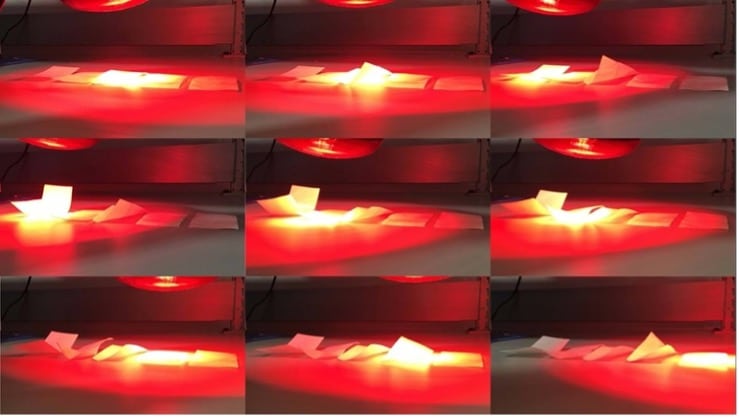

One ARD Group study involved laminates that self-deform when subjected to changes in temperature, light or humidity. The materials would enable a façade that responds differently depending on conditions to provide shading, prevent overheating or increase privacy. But to simulate the laminates’ nonlinear and unpredictable response, the group turned to ML.

“We used ML to predict how a passively actuated material would react to variable temperature changes,” said Martha Tsigkari, senior partner. “With the help of our bespoke distributed computing and optimization system, Hydra, we ran thousands of simulations to understand how thermoactivated laminates behave under varied heat conditions. We then used that data to train a deep neural network to tell us what the laminate layering should be, given a particular deformation that we required.”
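The workflow Tsigkari describes (simulate many layerings, then learn the reverse mapping from a required deformation back to a layering) can be sketched roughly as follows. This is an illustration only, not the ARD Group's method: `simulate_deflection` is a made-up toy formula standing in for the real simulations, and a simple nearest-neighbor average stands in for the deep neural network. All names and numbers are assumptions.

```python
import random

def simulate_deflection(layer_ratio: float, temp_rise_c: float) -> float:
    """Hypothetical stand-in for a slow laminate simulation.

    Returns a made-up tip deflection in mm; this is NOT a physical model.
    """
    return 40.0 * layer_ratio * (1.0 - layer_ratio) * temp_rise_c / 10.0

def build_dataset(n_samples: int, temp_rise_c: float, seed: int = 0):
    """Run many forward simulations and record (deflection, layer_ratio) pairs."""
    rng = random.Random(seed)
    data = []
    for _ in range(n_samples):
        # Sample only ratios where the toy formula is one-to-one,
        # so each deflection maps back to a single layering.
        ratio = rng.uniform(0.05, 0.5)
        data.append((simulate_deflection(ratio, temp_rise_c), ratio))
    return data

def predict_layer_ratio(dataset, target_deflection: float, k: int = 5) -> float:
    """Inverse model: average the layer ratios of the k closest simulated deflections."""
    nearest = sorted(dataset, key=lambda pair: abs(pair[0] - target_deflection))[:k]
    return sum(ratio for _, ratio in nearest) / len(nearest)

dataset = build_dataset(n_samples=2000, temp_rise_c=20.0)
ratio = predict_layer_ratio(dataset, target_deflection=12.0)

# Check the answer by running the forward simulation on the suggested layering.
achieved = simulate_deflection(ratio, 20.0)
print(f"suggested ratio {ratio:.3f} gives deflection {achieved:.2f} mm")
```

The final forward run is the useful habit here: whatever model proposes the layering, re-simulating the proposal shows how close it lands to the requested deformation.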

Predicting material deformation was just one application. To help automate mundane tasks and turbo-power productivity, the ARD Group is working on many more ideas around AI-powered design assist tools.

Verse Design faced similar performance constraints when designing the façade of Thirty75 Tech. The designers needed to find the optimal pattern of louvers to mitigate heat gain and meet California’s Title 24 energy efficiency standards.

“The final geometries were generated parametrically with real-time simulation data,” Tang explained. “The geometries were fed back to the energy model to find and confirm the most energy-efficient combination of louver variations that met the intent of the visual expression and performance objectives.”
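As a rough sketch of that feedback loop, the snippet below generates louver variations parametrically, scores each one with an energy model, and keeps the most efficient option that still meets a visual target. The two scoring functions, the parameter ranges and the threshold are invented for illustration; they are not Verse Design's model and do not represent Title 24 calculations.

```python
from itertools import product

def annual_heat_gain(angle_deg: float, spacing_mm: float) -> float:
    """Hypothetical energy model (lower is better). Toy formula only."""
    return (angle_deg - 35.0) ** 2 / 50.0 + spacing_mm / 20.0

def openness(angle_deg: float, spacing_mm: float) -> float:
    """Hypothetical visual criterion: share of the view kept, from 0 to 1."""
    return min(1.0, spacing_mm / 400.0) * (1.0 - angle_deg / 90.0)

def best_louver(angles, spacings, min_openness: float):
    """Score every combination and return the lowest heat gain that meets the visual target.

    Returns a (heat_gain, angle, spacing) tuple, or None if nothing qualifies.
    """
    feasible = [
        (annual_heat_gain(a, s), a, s)
        for a, s in product(angles, spacings)
        if openness(a, s) >= min_openness
    ]
    return min(feasible) if feasible else None

result = best_louver(
    angles=range(0, 91, 5),        # louver tilt in degrees
    spacings=range(100, 401, 50),  # louver spacing in millimeters
    min_openness=0.3,
)
print(result)
```

An exhaustive sweep like this is only practical when each evaluation is fast, which is one reason real-time simulation data matters in such a loop.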

Extraordinary content delivered faster

Foster + Partners has also used surrogate models to replace slow analytical processes, and keep costs in check, when exploring the impact of changing design variables. These ML models train on huge datasets to deliver a prediction that is sufficiently accurate and, most critically, available in real time. In early design stages, the surrogate model lets designers trade a small amount of accuracy for the ability to make sound decisions sooner.
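The surrogate idea can be illustrated with a small sketch: run the expensive analysis a limited number of times up front, fit a cheap approximation to those results, then query the approximation while designing. Everything here is assumed for illustration. `slow_daylight_analysis` is a toy formula with an artificial delay, and a piecewise-linear interpolant stands in for the trained ML model the article describes.

```python
import bisect
import math
import time

def slow_daylight_analysis(depth_m: float) -> float:
    """Hypothetical stand-in for an expensive analysis (toy formula plus a delay)."""
    time.sleep(0.01)
    return 100.0 * math.exp(-0.35 * depth_m)

class InterpolatingSurrogate:
    """Cheap approximation fitted once to precomputed analysis samples."""

    def __init__(self, xs, ys):
        pairs = sorted(zip(xs, ys))
        self.xs = [x for x, _ in pairs]
        self.ys = [y for _, y in pairs]

    def predict(self, x: float) -> float:
        # Clamp to the sampled range, then interpolate between neighboring samples.
        if x <= self.xs[0]:
            return self.ys[0]
        if x >= self.xs[-1]:
            return self.ys[-1]
        i = bisect.bisect_right(self.xs, x)
        x0, x1 = self.xs[i - 1], self.xs[i]
        y0, y1 = self.ys[i - 1], self.ys[i]
        return y0 + (y1 - y0) * (x - x0) / (x1 - x0)

# Pay the simulation cost once, on a coarse grid of room depths (0.0 to 10.0 m).
train_x = [i * 0.5 for i in range(21)]
train_y = [slow_daylight_analysis(x) for x in train_x]
surrogate = InterpolatingSurrogate(train_x, train_y)

# Later queries skip the slow analysis entirely.
query = 3.3
approx = surrogate.predict(query)
exact = slow_daylight_analysis(query)
print(f"surrogate {approx:.2f} vs. full analysis {exact:.2f}")
```

The gap between `approx` and `exact` is the accuracy being traded for speed; a denser training grid narrows it at the cost of more up-front simulation.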
